Pluxemburg.com

Pluxemburg.com is the website for Swedish technotronica band Pluxus and the record label Pluxemburg they run together with Jonas Sevenius and us (Martin Ström and Peter Ström).

Since its launch in 2000, Pluxemburg has gone from a small independent label with only one artist, Pluxus, to a bigger label with several artists (and grammy awards!), and then back to being a quite small label again. The 2009 version of Pluxemburg focuses mainly on Pluxus and Pluxus-related projects.

A small record label needs not only to work on the websites of its artists and the label itself, but also to constantly make sure that the info on the many online forums and communities is up to date and accurate. There need to be Facebook and Myspace pages, Last.fm events, discogs.com entries and so on. Updating these websites as soon as something happens (gigs, releases, parties, videos, etc.) is time-consuming and unfortunately often ends up being done in a copy-paste manner (spamming) rather than using the different forums for their different purposes.

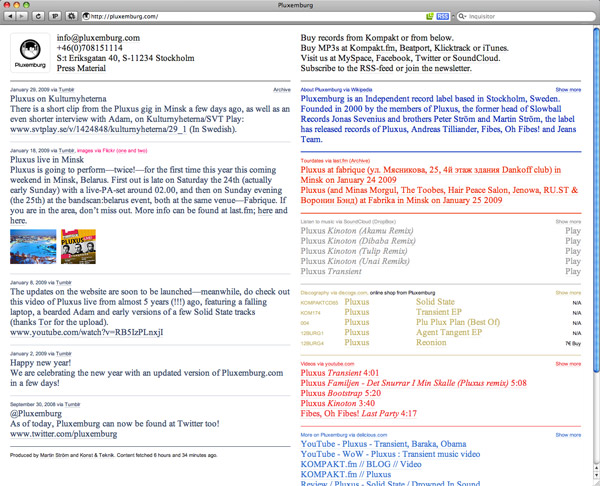

When thinking about these conditions in connection with building a new Pluxemburg website, we realized that instead of constantly keeping the Pluxemburg and Pluxus websites updated, we should make a website that mirrors the contents of each community and spend our time keeping these communities updated. The information for each part of the new Pluxemburg.com is therefore spread out to the community where it “belongs” the best. The discography is mirrored from Discogs, the events from Last.fm, videos from YouTube, information from Wikipedia, links from Delicious and sounds from SoundCloud. We use the popular tumblelog Tumblr for the newsfeed, which gives us not only a tool for keeping our website updated but a small community in itself too. All colors used on Pluxemburg.com are based on the “corporate” colors of each community, making the design a mirror of each community as well. There is even a Twitter feed that mirrors everything that goes on at Pluxemburg.com, somehow making the site into a complete loop.

Technically, a pretty straightforward Ruby script runs automatically on our server every day, collects the latest information from all sources and sends it (together with a security token to make sure the data isn’t messed with) to a PHP script at pluxemburg.com. The PHP script then processes (cleans and validates) the data and stores it in a file as serialized PHP, which the index page uses to present the information.
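
To give a rough idea of how a security token like this can work, here is a minimal Ruby sketch. It is not the actual Pluxemburg script: the token scheme (an HMAC-SHA256 over the JSON payload), the shared secret and the method names `build_request` and `valid_request?` are all assumptions made for illustration. The check on the receiving side is also written in Ruby here just to keep the example in one language; on the real site that part lives in the PHP script.

```ruby
require "json"
require "openssl"

# Hypothetical shared secret, known to both the sender and the receiver.
SHARED_SECRET = "replace-with-a-long-random-secret"

# Sender side: serialize the collected data and compute a token over
# the exact string that will be sent.
def build_request(data, secret)
  payload = JSON.generate(data)
  token = OpenSSL::HMAC.hexdigest(OpenSSL::Digest.new("SHA256"), secret, payload)
  { "payload" => payload, "token" => token }
end

# Compare two strings without stopping at the first differing byte.
def secure_equal?(a, b)
  return false unless a.bytesize == b.bytesize
  a.bytes.zip(b.bytes).reduce(0) { |acc, (x, y)| acc | (x ^ y) }.zero?
end

# Receiver side: recompute the token from the received payload and
# reject the request if it does not match the token that came with it.
def valid_request?(request, secret)
  expected = OpenSSL::HMAC.hexdigest(OpenSSL::Digest.new("SHA256"), secret, request["payload"])
  secure_equal?(expected, request["token"])
end

# Example data, made up for this sketch.
request = build_request({ "events" => ["Example gig"] }, SHARED_SECRET)
puts valid_request?(request, SHARED_SECRET)
```

Because the token depends on both the payload and the secret, anyone who changes the data on the way, or who does not know the secret, ends up with a token that no longer matches, and the receiver can simply ignore the request.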